Recently, I presented a workshop on my campus titled “Crafting Personalized Online Learning Experiences with VoiceThread.” The main objective of my workshop was to showcase the effectiveness of VoiceThread as a “learning space” where students share thoughts through voice and video comments, rather than through the text-based comments alone that are typical of the communication tools built into learning management systems.

The workshop was scheduled for the end of the quarter, and it was a quarter that was peppered with furloughs and very low morale. I was not expecting a big turnout. However, I was hoping for at least a small group. What resulted, however, was one of those fortuitous experiences in life that one comes to covet and reflect deeply upon. I hope the story touches you in some special way, as it has touched me.

As I had expected, the faculty turnout for the workshop was small — there was only one person who showed up. But this faculty member brought something very unique to my workshop. She was deaf. And she was there to learn about VoiceThread. Hmmm.

Her objective was to identify a way to communicate effectively with her students in an online learning environment, using video for signing. That is, she wanted her students to see her AND she wanted to see her students. I could see her challenges with the Blackboard interface. Blackboard is far from user friendly when trying to integrate video. As an art history professor, I understood the challenges of integrating even still images, so the need for a visually-centric learning environment had been a priority for me as well, although we certainly were coming at this from two different angles.

What I found so compelling about our conversation was our discussion about feeling “distanced” from our students with certain forms of communication. While I shared my sense of detachment from my students in an online teaching environment when I could only communicate with them via text, she shared with me that she feels distanced from her own students in class, there with her, when they don’t have effective signing skills or a good interpreter. Wow. That really gave me pause. I was learning more from this workshop than she would, for sure.

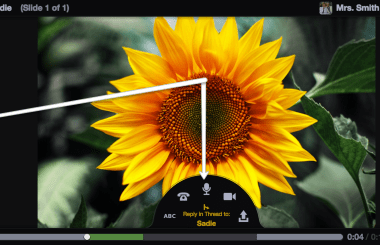

Embedded in my VoiceThread presentation, I had an example that Steve Muth, a Co-Founder of VoiceThread, had shared with me that showcases what I consider an amazingly innovative use of VoiceThread (from my perspective, anyway). What you see below is a VoiceThread that contains two slides. The first slide includes an introductory video comment by Rosemary Stifter. Then click on the “right arrow” icon to go to slide two. There you will view a discussion in which deaf children are empowered to engage in an online dialogue using sign language, rather than being required to type their thoughts. Imagine the liberating potential of this medium…

![]()

Thoughts? Comments?

This is a wonderful example of how expressive sign language is and how important this method of learning is. Thank you for showing this. I can just imagine how much of the students' comments would have been lost if they had to write their comments instead of signing them. Sign language is just that, a visual language, not a written one.

I am a Bible teacher for the deaf in Springfield, MO.

This is a wonderful tool, thanks again for the great example.

P.S. So there is no confusion: I am hearing, but I use the Bible in sign language (on DVD) and use sign language myself to teach the deaf the Bible.


This is a step in the right direction, but I work at a university where we design online courses. We would like to use VoiceThread, but we have only one deaf student, and the other students are not expected to know sign language. We need an up-to-the-minute captioning feature so that the comments from other students are "readable" by our deaf student.

Hi Jen,

VT is working on captioning and transcription options. They'll start with the main media first, and then we'll work towards transcription of commentary. 'Automatic' and instant captioning is probably a long way off: while there are technological solutions that transcribe audio, they are not yet accurate enough to provide a really accessible experience by themselves, so humans will have to be involved for the last bit of copy editing for some time to come. Of course this means no 'instant' and something more like a 24-hour turnaround time. You'll start to see captioning and transcription options from VT later this year.

But I'd like to make one point, and I certainly don't want to sound accusatory; if you could hear my voice you'd understand that there's none of that present ;-) Accessible technology tools are used in inaccessible ways every day. There is not, and I'd argue there never will be, a magic wand of accessibility that makes all communication methods highly accessible to all of the different types of people in this world. When will there be a tool to automatically transcribe grainy web video of a young ASL user talking about their ideas, so that they become accessible to a non-signer like me? It will be a long, long time. I'd argue that this is not the end of the world and that until the magic wand arrives we all need to simply keep accessibility at the forefront of our personal interactions every day, constantly shifting the way we interact to be more friendly to other people's needs. As a non-signer, when I interact with the faculty at Gallaudet University they accommodate me by not using ASL and sticking to text instead, and when I communicate with them I don't place a call or use an audio comment in a VT. I don’t believe this is a great burden on either of us and it’s a solution that involves zero technology.

But VT is a technology tool and so we do have a special obligation to travel a road where each mile finds us more and more accessible to more and more people. We'll keep plugging away. A good case in point is our upcoming iOS app, which will be fairly useless to a lot of vision-impaired people but will be a godsend to many with cognitive impairments. We promise to keep developing versions of the tool that allow more people into the conversational tent rather than taking a least-common-denominator approach that leads to a shared but mediocre collaborative experience. Along the way, though, we should not lose sight of the fact that the greatest accessibility tool of all time is currently available and often unused, and that is us: people and their capacity to empathize, adapt, and accommodate those we work with on a daily basis.

Thanks Jen and thanks Michelle, as usual you make conversations that inspire people to think deeply and to participate. Kudos:-)

-Steve